Summary

AI has quickly become part of the language of network observability. Many vendors across the observability landscape can describe, summarize, correlate, or explain some data or situation, leveraging basic LLM capabilities. At a distance, many of these offerings sound similar. They promise faster insight, efficient operations, and a more intelligent path through rising complexity. But the industry has reached a point where surface-level similarity is creating noise, not value. As agentic systems become more common, the industry needs a better definition of a quality solution to cut through the noise.

Introduction

The best question is not whether a solution can produce an answer in natural language or wrap a simplistic prompt around a familiar operational workflow. It is: What should qualify as a good agentic system in an environment where networks are more dynamic, more distributed, and more business critical than ever? AI data center growth is exploding at the same time that cloud expansion, hybrid architectures, digital dependencies, and escalating operational expectations are making network operations harder to manage with legacy approaches. Before we debate feature lists, we need a clearer standard for what good actually looks like.

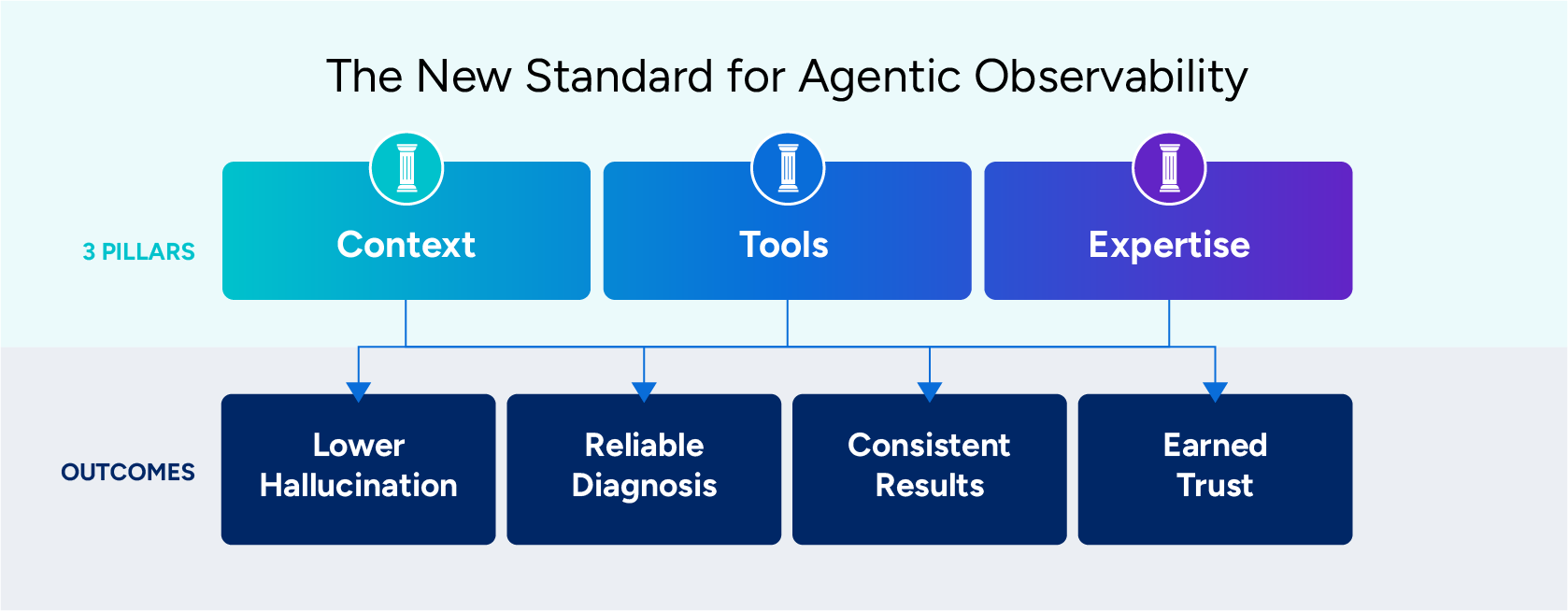

A good agentic system for network observability meets the following criteria:

- Assembles sufficiently complete, high-quality, critical operational data to drive context from a customer’s specific environment

- Uses tools in a disciplined way to verify and enrich that context

- Applies codified network engineering, architecture, and operations expertise to guide reasoning and direct or approve action

These are the standards that matter in today’s environment, because networks are not a commodity and can no longer be treated like one. Good does not mean fluent or potentially capable in the abstract. Good means grounded, disciplined, and guided by real operational expertise, which builds trust over time.

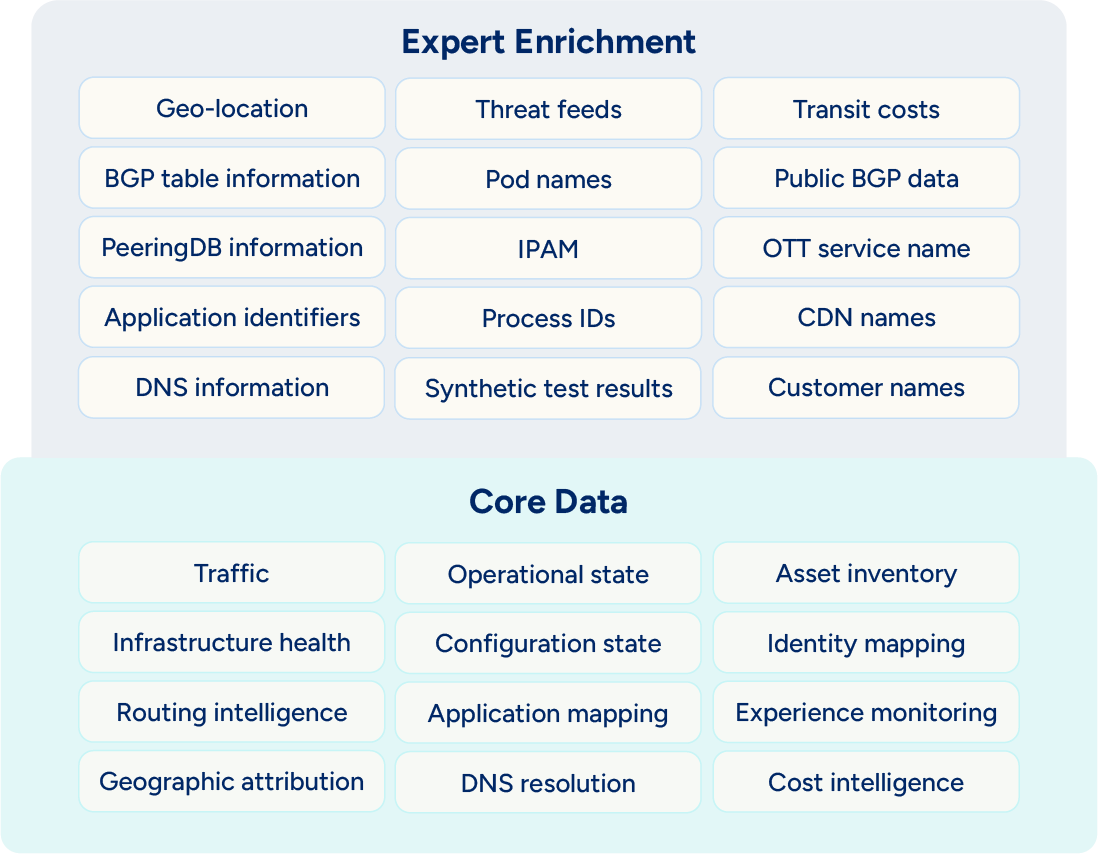

The right data, at the right time, in the right place, drives the best context

The first criterion to explore is data. The best system does not work from isolated signals, a partial snapshot, or stale data. It assembles a picture of the environment from broad data with complete, trustworthy enrichment, enough to support real diagnosis and real action. That is a much higher bar than simply collecting telemetry or presenting a visual dashboard.

In practice, systemically critical context means the system can understand topology and dependencies, correlate metrics, logs, and events, account for configuration and change history, and incorporate both cloud and on-premises infrastructure state. It should also recognize incident history, policy context, and the operational relationships that determine whether an observed issue is local, downstream, transient, or systemic. This is not just a matter of data diversity or volume, but of relevance, structure, and quality. A system that sees many disconnected signals is still operating with blind spots. A system that can assemble those signals into an operational picture is working at the level required for credible understanding.

This is where many AI experiences in observability remain incomplete. They can explain a graph, summarize an alert, or restate a symptom, but they often do so without enough environmental context to support a confident conclusion. In modern networks, the difference between a symptom and a root cause often depends on relationships that are not visible in a siloed tool, domain, or moment in time. Good starts with context that is complete and trustworthy enough to support decisions, not just descriptions.
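To make the symptom-versus-root-cause point concrete, here is a minimal sketch of how topology context can change the reading of a set of alerts. The device names, the `upstream_of` mapping, and the labels are hypothetical and exist only for illustration; a real platform would draw on far richer dependency data.

```python
def classify_alerts(alerting, upstream_of):
    """Label each alerting device as a candidate root cause or a likely symptom.

    alerting:    set of device names that currently have an active alert
    upstream_of: dict mapping a device to the device it depends on (hypothetical)
    """
    labels = {}
    for device in sorted(alerting):
        label = "candidate_root_cause"
        parent = upstream_of.get(device)
        seen = set()  # guards against loops in the dependency data
        # Walk up the dependency chain; an alerting ancestor suggests
        # that this device's alert is a downstream effect.
        while parent is not None and parent not in seen:
            if parent in alerting:
                label = "likely_downstream_symptom"
                break
            seen.add(parent)
            parent = upstream_of.get(parent)
        labels[device] = label
    return labels


upstream_of = {
    "access-sw-1": "agg-sw-1",
    "access-sw-2": "agg-sw-1",
    "agg-sw-1": "core-1",
}
alerting = {"access-sw-1", "access-sw-2", "agg-sw-1"}
labels = classify_alerts(alerting, upstream_of)
for device, label in labels.items():
    print(device, label)
```

Without the dependency map, the three alerts look like three separate problems. With it, two of them can be read as probable side effects of one upstream issue, which is the kind of relationship a siloed view cannot show.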

None of this means that maxing out a context window is the answer. Having the most timely and relevant data in one platform is optimal, but that data should not be passed to the model haphazardly. Recent research from Anthropic suggests that bloated context windows can degrade performance, raise costs, and reduce the accuracy with which a model recalls information from them.
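One way to picture curated context, as opposed to a maxed-out window, is a selection step that keeps only fresh, relevant items within a fixed token budget. The sketch below is an assumption-laden illustration: the `ContextItem` fields, the relevance scores, and the budget values are all hypothetical, and a production system would rank and size items in its own way.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ContextItem:
    source: str       # hypothetical label, e.g. "flow" or "config_change"
    text: str
    relevance: float  # 0.0 to 1.0, assumed to come from an upstream ranking step
    age_seconds: int  # how old the observation is
    tokens: int       # estimated token cost of including the item


def select_context(items: List[ContextItem], token_budget: int,
                   max_age_seconds: int) -> List[ContextItem]:
    """Keep the most relevant fresh items that fit within the token budget."""
    fresh = [i for i in items if i.age_seconds <= max_age_seconds]
    ranked = sorted(fresh, key=lambda i: i.relevance, reverse=True)
    chosen, used = [], 0
    for item in ranked:
        if used + item.tokens <= token_budget:
            chosen.append(item)
            used += item.tokens
    return chosen


items = [
    ContextItem("config_change", "BGP policy edited on edge-1", 0.9, 600, 40),
    ContextItem("flow", "Traffic to 10.0.0.0/8 dropped 60%", 0.8, 120, 50),
    ContextItem("syslog", "Routine NTP sync message", 0.1, 30, 30),
    ContextItem("snmp", "Interface counters from yesterday", 0.7, 86400, 60),
]
selected = select_context(items, token_budget=100, max_age_seconds=3600)
print([i.source for i in selected])
```

In this toy run the stale counter snapshot and the low-relevance syslog line are left out, so the model would receive two items instead of four.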

Ubiquitous tool use cannot solve data blind spots

Even a strong contextual foundation from relevant data is not enough on its own. In a live operating environment, unknowns remain. Conditions change in real time. Data may be incomplete, stale, or ambiguous. A good agentic system must be able to verify what it does not yet know, enrich its context with live evidence, inspect specific items or states, and support bounded operational actions where appropriate.

But tool use only adds value when it is governed. An agentic system that never verifies is limited: it works from assumptions and is more likely to be wrong. A system that uses tools indiscriminately is also problematic, because each tool call adds latency, cost, and new potential points of failure, and excessive calls can degrade result quality. Good tool use is selective, purposeful, and risk-aware. It operates within clear approval boundaries and understands that every action, even an investigative one, has operational consequences.

In network observability, this matters because environments are rarely static. A recommendation that is plausible at one moment can become wrong after a routing change, a cloud configuration update, or a sudden traffic shift. Disciplined tool use allows the system to close evidence gaps before it overcommits to a diagnosis or action.

This is one of the clearest distinctions between an effective agentic system and a superficial one. A superficial system generates an answer from whatever context it happens to have at hand. An effective system knows when the current evidence is insufficient, knows how to gather more, and does so in a bounded and intelligible way. Balanced tool use is neither absent nor uncontrolled; it is governed verification in service of better decisions and outcomes.
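A rough sketch of what "governed" could mean in code: every tool call passes through a gate that enforces a call budget, requires approval for anything that changes state, and records what happened. The `Tool` and `ToolGovernor` names, the budget of two calls, and the example tools are hypothetical and are not drawn from any specific product.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class Tool:
    name: str
    read_only: bool          # investigative tools only read state
    run: Callable[[], str]


@dataclass
class ToolGovernor:
    max_calls: int                                   # bounds latency and cost
    approved: Set[str] = field(default_factory=set)  # state-changing tools a human approved
    calls_made: int = 0
    audit_log: List[str] = field(default_factory=list)

    def call(self, tool: Tool) -> str:
        if self.calls_made >= self.max_calls:
            self.audit_log.append(f"denied:{tool.name}:budget")
            return "DENIED: call budget exhausted"
        if not tool.read_only and tool.name not in self.approved:
            self.audit_log.append(f"denied:{tool.name}:approval")
            return "DENIED: human approval required"
        self.calls_made += 1
        self.audit_log.append(f"ran:{tool.name}")
        return tool.run()


governor = ToolGovernor(max_calls=2)
show_routes = Tool("show_routes", True, lambda: "route table fetched")
bounce_port = Tool("bounce_port", False, lambda: "port bounced")

results = [
    governor.call(show_routes),   # read-only, within budget
    governor.call(bounce_port),   # state-changing, not yet approved
]
governor.approved.add("bounce_port")
results.append(governor.call(bounce_port))  # now approved
results.append(governor.call(show_routes))  # budget of two calls is spent
for line in results:
    print(line)
```

The audit log is the part that keeps the behavior intelligible: an operator can see which calls ran, which were refused, and why.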

Domain expertise matters

The third requirement is codified engineering and operations expertise. A production-ready system cannot rely on model reasoning alone. It must embed the logic and discipline that experienced network and operations teams have built over time. That includes network engineering knowledge, architecture patterns, operational heuristics, runbook logic, escalation rules, and approval boundaries.

While a rock-solid foundation in relevant data is key to context, that context cannot be created without an expert understanding of that data, combined with engineering and operations expertise codified through prompts.

This requirement is essential because operational credibility is not the same as general intelligence. A model may be able to generate a technically plausible answer, but plausibility is not the same as fitness for use in a real environment. Production settings require more than language fluency or broad pattern recognition. They require an understanding of how networks actually behave, how operators investigate uncertainty, when certain symptoms are likely to be secondary effects, when an escalation path should begin, and where automated action should stop without human approval.

Codified expertise is what turns a generally capable system into one that behaves credibly under operational pressure. It shapes how the system prioritizes evidence, interprets ambiguity, and decides whether an action is appropriate. Connecting relevant data for context requires domain expertise, and this is the difference between prompt engineering and context engineering for network operations. Without this layer, the system may be perceived as smart while missing the domain-driven realities that determine whether its guidance is useful or risky. The only acceptable agentic behavior is one that is shaped by expertise that has been made explicit and operationalized, not left to improvisation.

These three requirements matter most because they reinforce each other. Context built on relevant data but never verified can leave dangerous blind spots. Tools without discipline can produce noise, delay, and unnecessary risk. Simple prompting without expertly curated context produces generic answers of little use. Reasoning that lacks any of the three can still seem persuasive, even if it misses something important. That is one of the most important points for the industry to understand. The best agentic system is not defined by one capability in isolation. It is defined by how context, verification, and expertise work together to bound uncertainty.

From criteria to outcomes

The criteria discussed shape the outcomes that determine whether an agentic system is useful in real operational environments. When a system is grounded in curated context, guided by codified domain expertise, and disciplined in how it verifies and acts, four outcomes follow: lower hallucination risk, improved diagnostic reliability, more consistent, deterministic-like behavior, and greater operational trust. Together, these outcomes show how strong context, disciplined reasoning, and embedded operational knowledge translate into systems that are safer, more reliable, and more credible in practice.

Lowering hallucination risk

In operational settings, hallucination is not only a matter of factual invention. It also includes unsupported conclusions, hidden assumptions, and overconfident reasoning across evidence gaps. That risk grows when systems are forced to guess. It grows when they lack environmental context. It grows when they do not verify unknowns. It grows when they interpret operational signals without the benefit of domain-specific constraints.

A context layer with expertly curated data and shaped by deep domain expertise reduces the need to guess in the first place. Disciplined tools reduce uncertainty by verifying what is missing or ambiguous. Codified expertise reduces overreach by shaping how the system reasons and what it is allowed to conclude or do. The goal is not to pretend uncertainty disappears. No operational system should claim that. The goal is to approach zero unsupported reasoning by reducing blind spots and making evidence gaps visible. The better grounded and better governed the system is, the less room it has to invent what it does not know.

Improving diagnostic reliability

The second outcome is improved diagnostic reliability. Modern network environments are full of distributed dependencies, overlapping fault domains, noisy signals, real-time changes, and multiple plausible causes. In that reality, reliable diagnosis depends on more than a quick correlation or a statistically possible answer. It depends on whether the system can build the right hypotheses, test them against the environment, prioritize the most meaningful evidence, and distinguish symptoms from causes.

A system with stronger context sees more of the environment that shapes the problem. Disciplined verification can confirm or disprove hypotheses before presenting them as conclusions. Embedded operational expertise can better recognize patterns, apply escalation logic, and weigh the significance of different signals. Together, those capabilities improve the odds that the system reaches the right answer for the right reason. Reliable diagnosis depends on how well the system sees, checks, and interprets the environment, not on how confidently it communicates.
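The idea of confirming or disproving hypotheses before presenting them can be sketched as a small evaluation loop. Everything here is illustrative: the hypothesis names, the evidence keys, and the three status labels are assumptions, and real checks would query live systems rather than a static dictionary.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Hypothesis:
    name: str
    checks: List[Callable[[Dict], bool]]  # each check tests one expected observation


def evaluate(hypotheses: List[Hypothesis], evidence: Dict) -> Dict[str, str]:
    """Label each hypothesis by how many of its expected observations hold."""
    statuses = {}
    for h in hypotheses:
        passed = sum(1 for check in h.checks if check(evidence))
        if passed == len(h.checks):
            statuses[h.name] = "supported"
        elif passed == 0:
            statuses[h.name] = "unsupported"
        else:
            statuses[h.name] = "inconclusive"  # an evidence gap worth surfacing
    return statuses


# Hypothetical evidence gathered by earlier verification steps.
evidence = {
    "bgp_session_up": False,
    "recent_config_change": True,
    "interface_errors": 0,
    "link_utilization": 0.95,
    "queue_drops": 0,
}

hypotheses = [
    Hypothesis("bgp_policy_change", [
        lambda e: not e["bgp_session_up"],
        lambda e: e["recent_config_change"],
    ]),
    Hypothesis("faulty_optic", [
        lambda e: e["interface_errors"] > 0,
    ]),
    Hypothesis("congestion", [
        lambda e: e["link_utilization"] > 0.9,
        lambda e: e["queue_drops"] > 0,
    ]),
]

statuses = evaluate(hypotheses, evidence)
for name, status in statuses.items():
    print(name, status)
```

Only the hypothesis whose expected observations all hold is reported as supported. The partially matched one is flagged as inconclusive rather than presented as a conclusion.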

Heading toward more deterministic-like results

The third outcome is more consistent, deterministic-like behavior. This is an important distinction because consistency in AI is often treated as a model issue when it is more accurately a system issue. Variability is not only a product of stochastic language generation; it is also a product of incomplete context, inconsistent evidence gathering, unbounded reasoning, and unclear action rules.

More consistent outcomes come from reducing those sources of variance. When the system has fewer evidence gaps, follows required verification steps, reasons within codified expert guidance, and operates within bounded action and approval rules, the path from observation to conclusion becomes more stable. The result is not strict determinism in the classical sense, but it is deterministic-like in the way that matters operationally. Similar problems are approached with similar methods. Similar evidence is weighed through similar standards. Similar actions are governed by similar boundaries. Consistency improves when the system is designed to reduce variance in how conclusions are formed and how actions are taken.

Autonomy will not be achieved without trust

The final outcome is operational trust. Trust is often treated as a branding claim, but in practice, it is a system result. It is earned through repeated exposure to grounded reasoning, evidence-backed conclusions, visible verification, bounded authority, and consistent behavior. Users do not trust systems because of marketing. They trust systems that prove, over time, that they can function with precision within the realities of the environment.

That is especially true in network observability, where consequences are rarely confined to a single component or team. Trust depends on the belief that the system understands enough of the environment to be useful, checks enough of the evidence to be credible, and respects enough operational discipline to be safe. It is the cumulative result of architecture, process, and behavior. Trust in agentic systems is earned when systems repeatedly show and maintain a record of how they reason, verify, and act within the constraints that real environments impose.

The standard should be higher

As agentic systems become more common, the industry needs a better definition of quality. In network observability, good cannot mean merely fluent or perceived as somewhat capable. It is not an interface that appears modern while the reasoning beneath it remains shallow, unverified, or operationally naive. It must be sufficiently grounded, appropriately verified, and shaped by operational expertise.

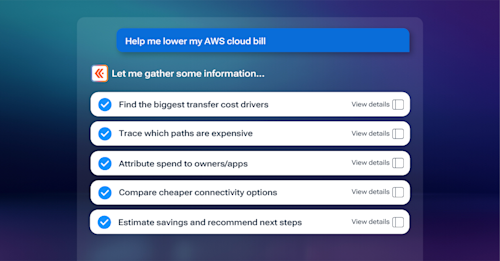

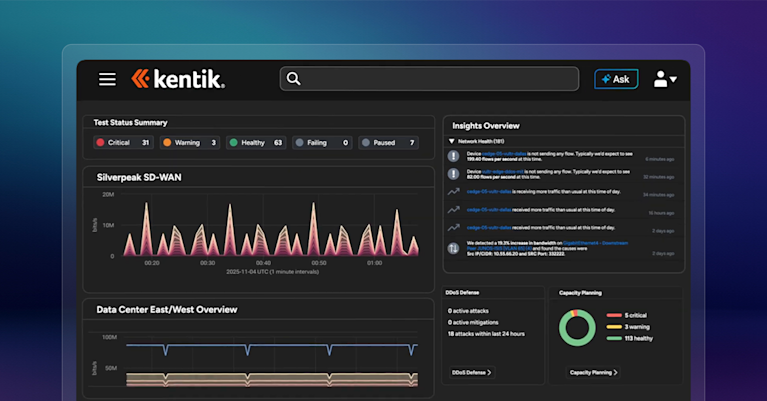

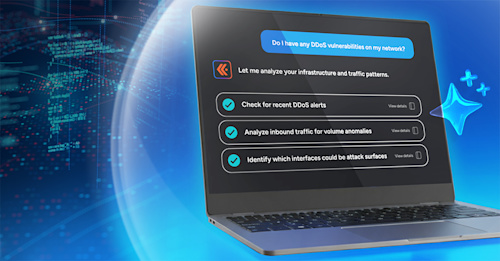

This higher standard is also where real differences across platforms are revealed, and where network intelligence moves beyond network observability. The Kentik Network Intelligence Platform and AI Advisor don’t simply add AI to existing data views:

- They provide a context layer grounded in expertly curated data and informed by collective decades of deep domain expertise

- They leverage disciplined tool use with proficiency to close evidence gaps

- They apply codified network architecture, engineering, and operations expertise to guide reasoning and action

This combination lowers hallucination risk, improves diagnostic reliability, creates more consistent outcomes, and earns the operational trust that sets the standard.