Cloud DDoS Detection and Protection: How Cloud-Scale Analytics Defends Modern Networks

Cloud DDoS Detection and Protection at a Glance

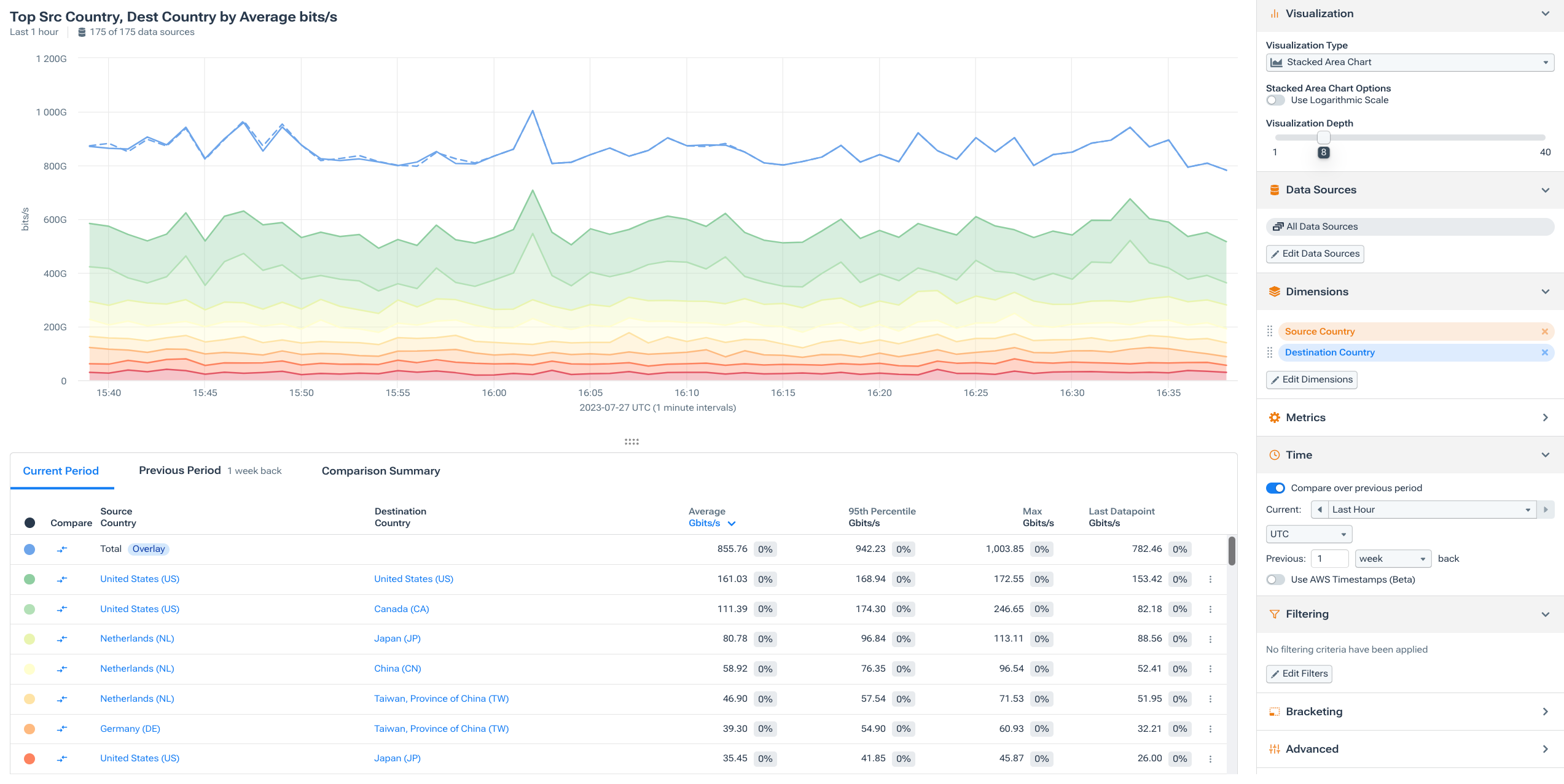

Cloud DDoS detection uses cloud-scale compute and storage to analyze network flow data from on-prem, cloud, and hybrid environments — detecting distributed denial-of-service attacks faster and more accurately than legacy appliance-based approaches. Modern cloud DDoS platforms ingest NetFlow, IPFIX, sFlow, and cloud-native flow logs from AWS, Azure, and GCP into a unified analytics layer, enabling network-wide visibility and automated mitigation regardless of where attacks originate or where workloads run.

What Is Cloud DDoS Detection?

Cloud DDoS detection is the practice of identifying and responding to distributed denial-of-service attacks using cloud-delivered analytics platforms and cloud-native telemetry sources. The term covers two related but distinct ideas: detecting DDoS attacks that target cloud infrastructure, and using cloud-delivered (SaaS) platforms to detect DDoS across any environment, including on-prem networks.

Both angles matter for modern NetOps teams. As workloads shift to AWS, Azure, and GCP, the attack surface moves with them — and the telemetry available for detection changes too. At the same time, the architectural advantages of cloud-delivered analytics (elastic scale, no hardware ceilings, centralized visibility) make SaaS platforms the natural home for DDoS detection even for organizations whose infrastructure is primarily on-premises.

The result is a DDoS detection model that’s fundamentally different from the appliance era: instead of deploying dedicated hardware at each network boundary, teams point their flow telemetry — from routers, switches, and cloud environments alike — at a cloud-scale analytics platform that baselines traffic, detects anomalies, and orchestrates mitigation across the entire network.

For a detailed walkthrough of how flow-based detection works in practice, see How to Detect DDoS Attacks Using Flow Analytics.

Kentik in brief: Kentik is the network intelligence platform for cloud and hybrid DDoS defense. Kentik ingests NetFlow, IPFIX, sFlow, and cloud-native flow logs at petabyte scale, correlates them with BGP routing and cloud metadata, and detects DDoS attacks across on-prem, cloud, and hybrid environments from a single SaaS platform. When an attack is detected, Kentik triggers automated mitigation via RTBH, Adaptive FlowSpec, or integrated scrubbing partners including Cloudflare, Radware, Akamai, and A10 Networks.


Why Cloud Environments Need Dedicated DDoS Visibility

Traditional DDoS detection was designed for a world where traffic entered your network through a predictable set of border routers, each exporting NetFlow or IPFIX. Cloud environments break that model in several ways that demand a different approach to visibility.

Shared-responsibility security models mean that the cloud provider offers baseline infrastructure-layer protections and, depending on the platform and architecture, optional native DDoS and application protection services such as AWS Shield, Azure DDoS Protection, and Google Cloud Armor. The customer is still responsible for understanding workload-specific traffic patterns, detecting attacks across their own VPCs, VNets, and accounts, and coordinating response beyond what the provider-native controls handle automatically. Relying solely on native protections can leave gaps in cross-environment visibility, baselining, and operational control.

Ephemeral and elastic workloads — containers, serverless functions, auto-scaling groups — create traffic patterns that change constantly. Baselining “normal” in a cloud environment requires an analytics platform that adapts to shifting infrastructure, not static thresholds tied to fixed interfaces.
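To make the difference between static thresholds and adaptive baselines concrete, here is a minimal sketch of one common technique: an exponentially weighted moving average (EWMA) of a traffic metric, with an alert threshold a few standard deviations above it. It is an illustration under stated assumptions, not how any particular platform baselines traffic. The class name `EwmaBaseline` and the values for `alpha`, `k`, and `warmup` are hypothetical choices for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class EwmaBaseline:
    """Adaptive baseline for one traffic metric (for example, packets per second).

    alpha: how quickly the baseline follows change (illustrative value).
    k: standard deviations above the mean treated as anomalous (illustrative).
    warmup: samples learned unconditionally before any alerting.
    """
    alpha: float = 0.1
    k: float = 4.0
    warmup: int = 10
    mean: float = 0.0
    var: float = 0.0
    seen: int = 0

    def _learn(self, value: float) -> None:
        # Incremental exponentially weighted mean and variance.
        if self.seen == 0:
            self.mean = value
        else:
            diff = value - self.mean
            self.mean += self.alpha * diff
            self.var = (1 - self.alpha) * (self.var + self.alpha * diff * diff)
        self.seen += 1

    def update(self, value: float) -> bool:
        """Return True if value looks anomalous; learn from it otherwise."""
        if self.seen < self.warmup:
            self._learn(value)
            return False
        anomalous = value > self.mean + self.k * math.sqrt(self.var)
        if not anomalous:
            # Skip anomalous samples so an attack does not inflate the baseline.
            self._learn(value)
        return anomalous


# Usage: a gradual auto-scaling ramp (~950 to ~2040 pps) followed by a sudden flood.
baseline = EwmaBaseline()
ramp_flags = [baseline.update(1000 + 10 * i + (50 if i % 2 else -50)) for i in range(100)]
flood_flag = baseline.update(20_000)
```

The gradual ramp is absorbed into the baseline without alerts, while the sudden jump is flagged. A fixed threshold of, say, 1,500 pps would have fired partway through the legitimate scale-up. One design trade-off to note: because anomalous samples are never learned, a real and permanent step change in traffic would keep alerting until an operator or a separate rule resets the baseline.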

East-west traffic between services within a VPC or across VPCs is invisible to perimeter-focused detection. In cloud architectures, a compromised internal workload can generate attack traffic laterally without ever crossing a traditional network boundary. Cloud flow logs are the primary telemetry source for this visibility.

Multi-region and multi-account complexity means that a single organization’s cloud footprint may span dozens of accounts, regions, and VPCs across multiple providers. Without a centralized analytics layer that normalizes and correlates telemetry from all of these sources, attacks targeting one region or account may not be detected in time — or may not be detected at all.

Different telemetry characteristics mean that cloud flow logs behave differently from router-exported flow data. Delivery timing, sampling, field availability, and aggregation intervals vary by provider and configuration. A detection platform that only understands NetFlow may miss patterns that are visible in cloud-native telemetry, or vice versa.

These challenges don’t make cloud environments inherently less defensible — but they do require detection platforms that understand cloud-native telemetry, adapt to dynamic infrastructure, and correlate across environments. That’s the core capability gap that cloud DDoS detection addresses.

Cloud-Native Telemetry for DDoS Detection

Each major cloud provider offers a flow log service that captures traffic metadata at the virtual network level. These logs are the primary raw material for DDoS detection in cloud environments, and understanding their characteristics is important for setting realistic expectations about detection sensitivity and speed.

- AWS VPC Flow Logs capture metadata about traffic flowing to and from network interfaces in a VPC. Logs can be published to CloudWatch Logs, Amazon S3, or Amazon Data Firehose. Fields include source/destination IP, ports, protocol, packets, bytes, and action (accept/reject), and AWS supports custom log formats with additional fields such as TCP flags, traffic type, and flow direction. VPC Flow Logs are aggregated flow records collected over capture windows of 1 minute or 10 minutes, with delivery typically occurring in about 5 minutes to CloudWatch Logs or about 10 minutes to S3 on a best-effort basis. They do not expose a user-configurable packet-sampling setting, but they are not packet-by-packet captures, and some records or traffic types may be omitted.

- Azure virtual network flow logs (the evolution of the earlier NSG Flow Logs) capture traffic at the virtual network level rather than per network security group. They can be written to Azure Storage and integrated with Traffic Analytics. Fields include the standard five-tuple plus bytes, packets, and a flow-state value that tracks whether a connection is new, continuing, or terminating, which provides richer context for detecting state-exhaustion attacks.

- Google Cloud VPC Flow Logs sample traffic at the VPC level and export metadata including source/destination IP, ports, protocol, bytes, packets, and RTT. Google uses a dynamic primary sampling process that is not user-configurable, followed by a configurable secondary sampling rate. The default secondary rate is 50% for configurations created with the Compute Engine API and 100% for configurations created with the Network Management API. Logs are delivered through Cloud Logging and can be exported to BigQuery, Pub/Sub, or Cloud Storage.
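As a concrete example of working with one of these sources, the sketch below parses a single AWS VPC Flow Logs record in the default (version 2) field order and derives an average packet rate from its capture window. It assumes the default format only; custom formats reorder or add fields and would need their own field list. The names `parse_default_record` and `VpcFlowRecord` are hypothetical, and the sample values are illustrative.

```python
from dataclasses import dataclass
from typing import Optional

# Field order of the AWS VPC Flow Logs default (version 2) format.
DEFAULT_FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)


@dataclass(frozen=True)
class VpcFlowRecord:
    account_id: str
    interface_id: str
    srcaddr: str
    dstaddr: str
    srcport: int
    dstport: int
    protocol: int   # IANA protocol number (6 = TCP, 17 = UDP)
    packets: int
    bytes: int
    start: int      # capture window start, Unix seconds
    end: int        # capture window end, Unix seconds
    action: str     # ACCEPT or REJECT

    @property
    def packets_per_second(self) -> float:
        # Average rate across the capture window; bursts inside it are invisible.
        return self.packets / max(self.end - self.start, 1)


def parse_default_record(line: str) -> Optional[VpcFlowRecord]:
    """Parse one default-format record; return None for NODATA/SKIPDATA or malformed lines."""
    tokens = line.split()
    if len(tokens) != len(DEFAULT_FIELDS):
        return None
    f = dict(zip(DEFAULT_FIELDS, tokens))
    if f["log_status"] != "OK":
        return None  # these records use "-" placeholders instead of values
    return VpcFlowRecord(
        account_id=f["account_id"],
        interface_id=f["interface_id"],
        srcaddr=f["srcaddr"],
        dstaddr=f["dstaddr"],
        srcport=int(f["srcport"]),
        dstport=int(f["dstport"]),
        protocol=int(f["protocol"]),
        packets=int(f["packets"]),
        bytes=int(f["bytes"]),
        start=int(f["start"]),
        end=int(f["end"]),
        action=f["action"],
    )


# Sample record in the default layout (values illustrative).
rec = parse_default_record(
    "2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
    "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK"
)
```

Because each record summarizes a whole capture window, the derived rate is an average. This is one reason cloud flow logs are better at surfacing sustained floods than short bursts.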

For DDoS detection, the most important practical differences between these sources are delivery latency (how quickly logs arrive at the analytics platform after traffic occurs), sampling rate (whether every flow is logged or only a statistical sample), and field richness (whether TCP flags, flow direction, and connection state are available). These variables directly affect how fast an attack can be detected and how precisely it can be classified.

The good news is that volumetric and reflection attacks — the most common DDoS types — generate enough traffic volume to produce clear signals even in sampled cloud flow logs. Where cloud telemetry is less precise is with low-rate application-layer attacks, where the signal may be diluted by sampling or delayed by aggregation windows.
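The reason sampling hurts low-rate attacks more than floods can be shown with a little arithmetic. Assume, as a simplification of what any given provider actually does, that each packet or flow is kept independently with probability p. The true count is then estimated by dividing the sampled count by p, and the relative standard error of that estimate is sqrt((1 - p) / (n * p)) for a true count n. The helper names below are hypothetical.

```python
import math


def scale_up(sampled_count: int, sampling_rate: float) -> float:
    """Estimate the true count from a sampled count (inverse-probability scaling)."""
    if not 0 < sampling_rate <= 1:
        raise ValueError("sampling_rate must be in (0, 1]")
    return sampled_count / sampling_rate


def relative_std_error(true_count: float, sampling_rate: float) -> float:
    """Relative standard error of scale_up() under independent Bernoulli sampling."""
    return math.sqrt((1 - sampling_rate) / (true_count * sampling_rate))


# Compare a large flood with a small, low-rate attack at 50% sampling.
for label, n in (("volumetric flood", 1_000_000), ("low-rate attack", 50)):
    print(f"{label}: ±{relative_std_error(n, 0.5):.1%}")
```

At 50% sampling, a million-packet flood is estimated to within roughly ±0.1%, while a 50-packet trickle carries roughly ±14% error. That uncertainty is large enough to blur the difference between an attack and normal variation.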

For a deeper dive into VPC flow logs across providers, see What Are VPC Flow Logs?.

Cloud-Delivered vs. Appliance-Based DDoS Detection

The original promise of cloud DDoS detection — that cloud-scale compute and storage would outperform fixed-capacity appliances — has been validated by a decade of production deployments. But the advantages go beyond raw scale.

No hardware ceilings

Appliance-based DDoS detection (such as NETSCOUT Arbor Sightline) runs on dedicated servers with fixed memory and compute. As flow volumes grow — more devices, more interfaces, more cloud VPCs — the appliance eventually hits capacity limits that require hardware upgrades or additional units. Cloud-delivered platforms scale elastically with the data, ingesting telemetry from thousands of sources without capacity planning on the customer’s side. For a detailed comparison of flow-based and appliance-based detection methodologies, see How to Detect DDoS Attacks Using Flow Analytics.

Faster time to value

Appliances require procurement, racking, configuration, and ongoing maintenance at each deployment site. SaaS DDoS detection requires pointing flow exports (and cloud flow log integrations) at the platform — typically a configuration change that can be completed in hours, not weeks. This is especially significant for organizations expanding into new cloud regions, onboarding acquisitions, or adding monitoring to previously unprotected parts of the network.

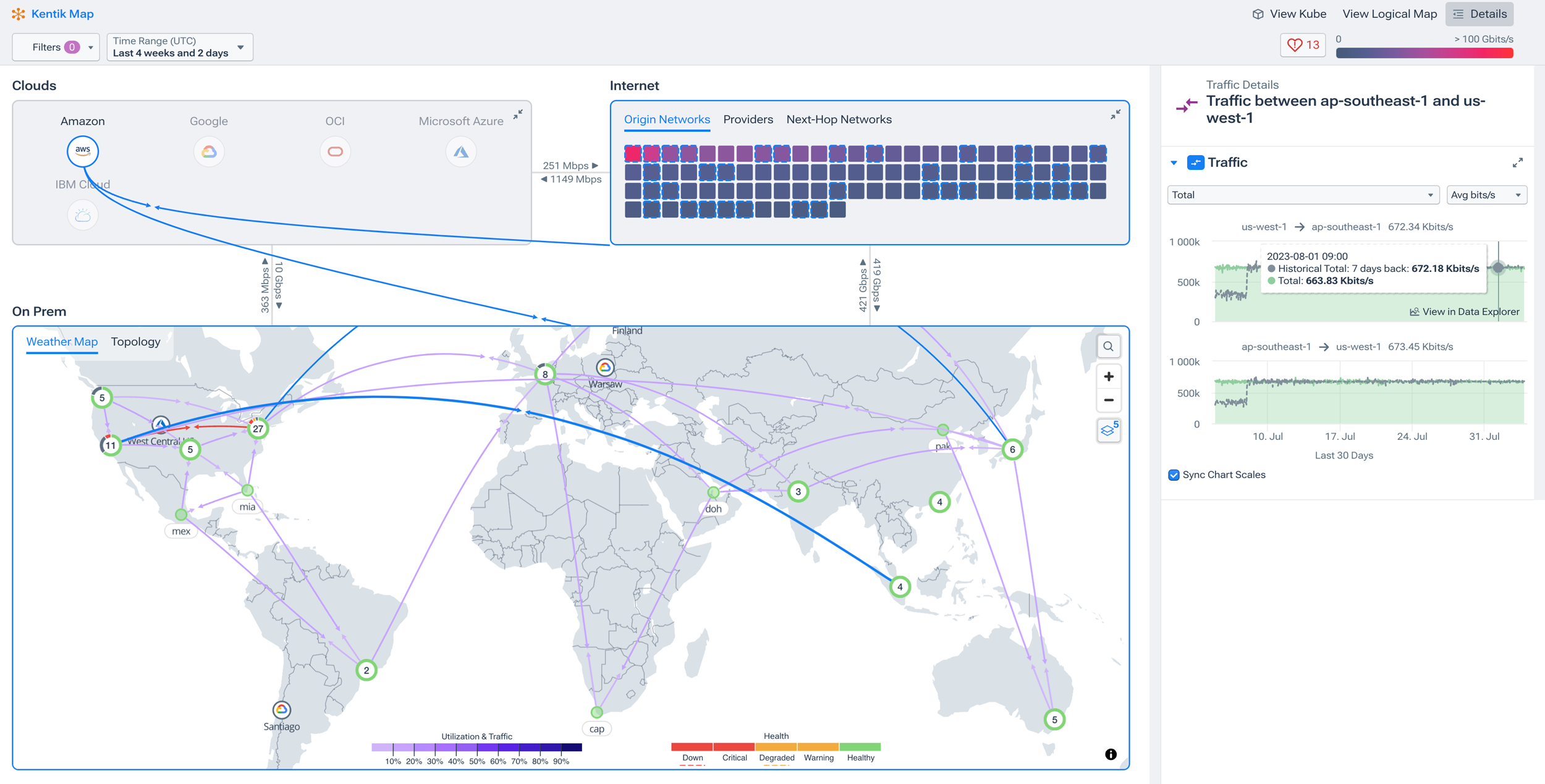

Centralized, correlated visibility

This is arguably the most important advantage for DDoS detection specifically. An attack that enters your network through a peering point, traverses your backbone, and targets a workload running in AWS looks different at each observation point. Appliance-based architectures require correlation across multiple devices; cloud-delivered platforms see all telemetry — on-prem NetFlow, cloud flow logs, BGP routing data — in one place, making attack detection and path analysis inherently simpler.
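A minimal sketch of what "correlation in one place" can mean in practice: alerts raised from different observation points are merged into a single incident when they name the same target and occur close together in time. The `Alert`/`Incident` types, the `correlate` function, and the 300-second window are hypothetical choices for illustration. Real platforms typically correlate on richer keys, such as prefixes, attack signatures, and routing paths.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Alert:
    source: str      # observation point, e.g. "edge-router-1" or "aws-vpc-flow-logs"
    target: str      # destination IP under attack
    timestamp: int   # Unix seconds


@dataclass
class Incident:
    target: str
    first_seen: int
    last_seen: int
    sources: set = field(default_factory=set)


def correlate(alerts, window_s: int = 300):
    """Merge alerts for the same target into one incident while gaps stay <= window_s."""
    incidents = []
    open_incidents = {}  # target -> most recent incident for that target
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        inc = open_incidents.get(alert.target)
        if inc is None or alert.timestamp - inc.last_seen > window_s:
            inc = Incident(alert.target, alert.timestamp, alert.timestamp)
            incidents.append(inc)
            open_incidents[alert.target] = inc
        inc.last_seen = alert.timestamp
        inc.sources.add(alert.source)
    return incidents


alerts = [
    Alert("edge-router-1", "203.0.113.10", 1000),
    Alert("core-router-2", "203.0.113.10", 1030),
    Alert("edge-router-1", "198.51.100.5", 1050),
    Alert("aws-vpc-flow-logs", "203.0.113.10", 1100),
    Alert("edge-router-1", "203.0.113.10", 2000),  # > 300 s later: new incident
]
incidents = correlate(alerts)
```

Three observation points reporting the same target within a few minutes become one incident with three sources, rather than three separate alerts an operator must reconcile by hand.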

Continuous improvement without upgrades

Cloud platforms ship detection improvements, new attack signatures, and ML model updates continuously. Customers benefit from these improvements without scheduling maintenance windows, upgrading firmware, or validating compatibility. In a threat landscape where attack techniques evolve quickly, this continuous delivery model matters.

Where cloud-native protections fit

It’s worth noting that cloud-native DDoS protections, including AWS Shield, Azure DDoS Protection, and Google Cloud Armor, are complementary to, not replacements for, a unified detection platform. These services can provide valuable mitigations and provider-specific metrics, alerts, or attack visibility for the resources they protect, but they operate within the boundaries and data models of a single cloud. A dedicated detection platform adds cross-environment baselining, unified attack analysis across on-prem and multi-cloud telemetry, and orchestration across provider-native controls, routers, and third-party scrubbing services. The strongest architectures layer both.

For a broader view of DDoS protection strategies and tools, see DDoS Protection and Mitigation: A 2026 Guide.

Protecting Hybrid and Multi-Cloud Networks from DDoS

Most organizations don’t live in a single environment. They have workloads in AWS, Azure, or GCP alongside on-prem data centers, colocation facilities, and SD-WAN edges — and DDoS attacks don’t respect those boundaries. A volumetric flood might enter through a transit peer, cross your backbone, and target a cloud-hosted application. A reflection attack might hit your on-prem peering edge and your cloud load balancers simultaneously.

Defending this kind of environment requires a detection platform that treats all telemetry as part of a single data set. In practice, that means ingesting on-prem flow data (NetFlow, IPFIX, sFlow) and cloud-native flow logs (from every cloud account, region, and VPC) into one analytics backend, normalizing the different formats, enriching them with routing and cloud metadata, and running anomaly detection across the unified view.
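The normalize-then-enrich step can be sketched as follows. Two differently shaped records (a decoded NetFlow v5 record using its `dPkts`/`dOctets`/`prot` field names, and an AWS VPC flow record keyed by default-format field names) are mapped into one hypothetical `UnifiedFlow` schema. Router sampling is undone by multiplying by the 1-in-N interval. Each flow is then tagged with cloud metadata from the most specific matching CIDR in an inventory. The schema, the inventory contents, and all IDs are illustrative assumptions; in practice the inventory would come from provider APIs.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass
class UnifiedFlow:
    origin: str        # telemetry source type
    src: str
    dst: str
    dst_port: int
    protocol: int
    packets: float     # estimated true count after undoing sampling
    bytes: float
    tags: dict = field(default_factory=dict)


def from_netflow_v5(rec: dict, sampling_interval: int) -> UnifiedFlow:
    # Assumes addresses already decoded to strings; router sampled 1-in-N, so scale by N.
    return UnifiedFlow("netflow", rec["srcaddr"], rec["dstaddr"], rec["dstport"],
                       rec["prot"], rec["dPkts"] * sampling_interval,
                       rec["dOctets"] * sampling_interval)


def from_aws_vpc(rec: dict) -> UnifiedFlow:
    # Assumes a record already split into AWS default-format field names (string values).
    return UnifiedFlow("aws-vpc", rec["srcaddr"], rec["dstaddr"], int(rec["dstport"]),
                       int(rec["protocol"]), int(rec["packets"]), int(rec["bytes"]))


# Hypothetical inventory: CIDR -> cloud metadata.
INVENTORY = [
    (ip_network("10.20.0.0/16"), {"account": "111111111111", "vpc": "vpc-example",
                                  "region": "us-east-1"}),
    (ip_network("10.20.5.0/24"), {"account": "111111111111", "vpc": "vpc-example",
                                  "region": "us-east-1", "subnet": "subnet-web",
                                  "app": "storefront"}),
]


def enrich(flow: UnifiedFlow, inventory=INVENTORY) -> UnifiedFlow:
    """Attach metadata from the most specific inventory prefix containing the destination."""
    dst = ip_address(flow.dst)
    matches = [(net, meta) for net, meta in inventory if dst in net]
    if matches:
        _, meta = max(matches, key=lambda m: m[0].prefixlen)
        flow.tags.update(meta)
    return flow


flows = [
    enrich(from_netflow_v5({"srcaddr": "198.51.100.7", "dstaddr": "10.20.5.40",
                            "dstport": 443, "prot": 6, "dPkts": 3, "dOctets": 180},
                           sampling_interval=1000)),
    enrich(from_aws_vpc({"srcaddr": "192.0.2.9", "dstaddr": "10.20.9.12", "dstport": "53",
                         "protocol": "17", "packets": "40", "bytes": "3200"})),
]
```

Longest-prefix matching lets a broad VPC-level entry and a narrower subnet-level entry coexist. This is what allows an alert to name "the storefront web subnet" instead of just an IP address.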

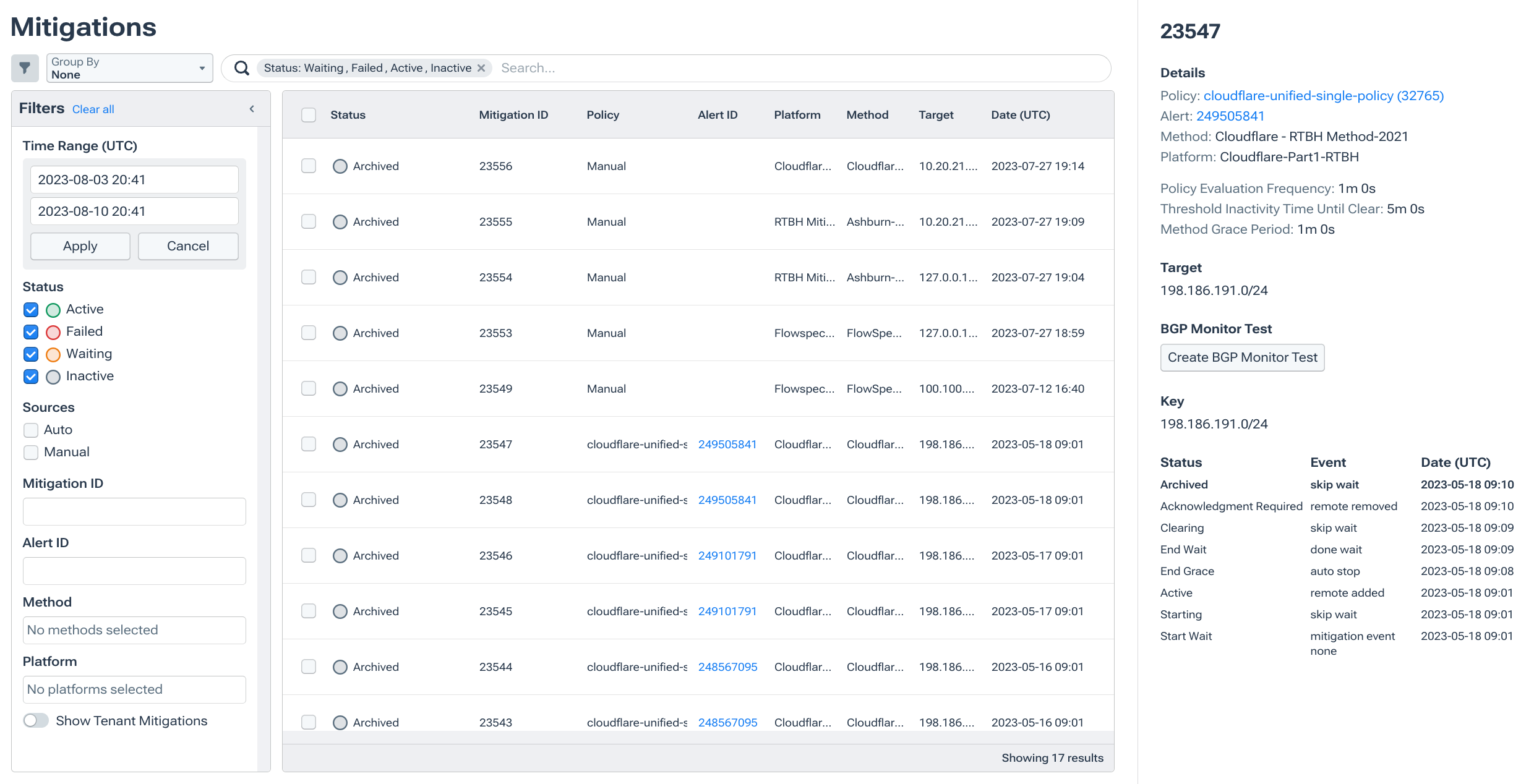

The mitigation side is equally important. When a hybrid attack is detected, the response may need to span multiple domains: triggering RTBH or FlowSpec on your border routers to drop traffic at the network edge, leveraging already-enabled cloud-native protections such as AWS Shield Advanced, Azure DDoS Protection, or Google Cloud Armor for cloud-exposed workloads, and diverting to a scrubbing provider like Cloudflare Magic Transit for volumetric floods that exceed on-network capacity. A cloud DDoS detection platform that can orchestrate across these mechanisms, rather than requiring separate tools for each, dramatically reduces response time and operational complexity.
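One way to picture "orchestrating across these mechanisms" is as a policy that maps attack characteristics to a mitigation path. The sketch below is a hypothetical, deliberately simplified policy, not Kentik's or any vendor's actual logic. The enum, the `DetectedAttack` fields, and the 100 Gbps edge capacity are all assumptions. It encodes the trade-offs described above: scrubbing for floods larger than the edge can absorb, provider-native controls for cloud-hosted targets, FlowSpec when traffic can be matched precisely, and RTBH as the blunt last resort.

```python
from dataclasses import dataclass
from enum import Enum


class Mitigation(Enum):
    SCRUBBING = "divert to scrubbing provider"
    CLOUD_NATIVE = "rely on enabled cloud-native protection"
    FLOWSPEC = "BGP FlowSpec filter at border routers"
    RTBH = "remotely triggered blackhole for the target"


@dataclass(frozen=True)
class DetectedAttack:
    target_in_cloud: bool      # target is a cloud-hosted workload
    peak_gbps: float           # observed peak attack volume
    has_clean_signature: bool  # e.g. one protocol/port pattern a filter can match


def choose_mitigation(attack: DetectedAttack, edge_capacity_gbps: float = 100.0) -> Mitigation:
    """Hypothetical policy: pick one mitigation path from attack characteristics."""
    if attack.peak_gbps > edge_capacity_gbps:
        # Volume would saturate links before on-network filters can act.
        return Mitigation.SCRUBBING
    if attack.target_in_cloud:
        return Mitigation.CLOUD_NATIVE
    if attack.has_clean_signature:
        # Surgical: drops only matching traffic; legitimate traffic still flows.
        return Mitigation.FLOWSPEC
    # Last resort: blackholing drops all traffic to the target, legitimate included.
    return Mitigation.RTBH


print(choose_mitigation(DetectedAttack(False, 20.0, True)).value)
```

A production policy would usually combine several actions at once, such as scrubbing plus FlowSpec, and would include approval steps and automatic withdrawal once the attack subsides. The single-return design here is purely for readability.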

For more on how modern platforms like Kentik compare to legacy appliance-based DDoS vendors, see NETSCOUT Arbor Alternatives.

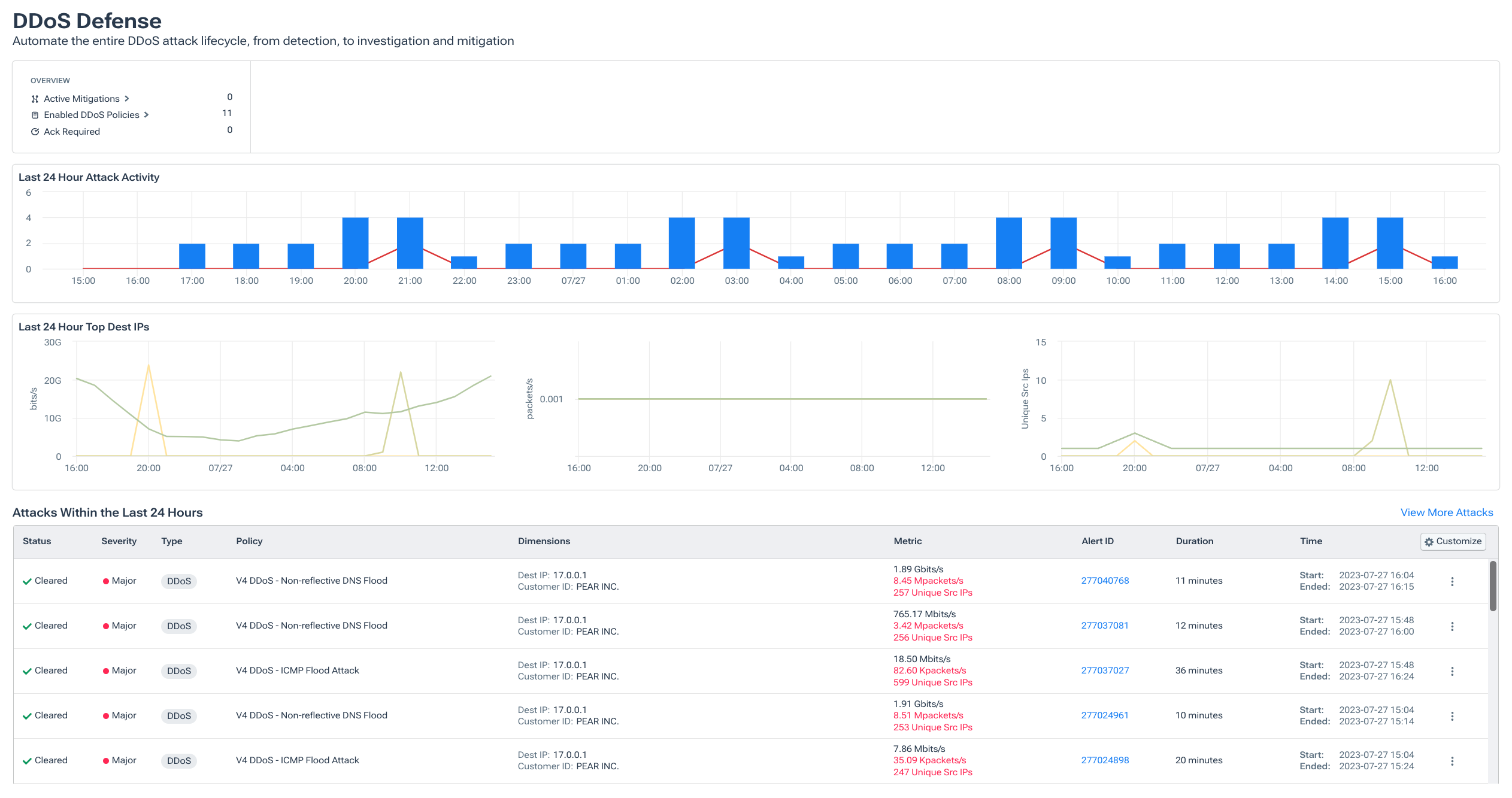

What to Look for in a Cloud DDoS Detection Platform

If you’re evaluating platforms for DDoS detection across cloud and hybrid environments, these capabilities matter most:

- Cloud-native flow log ingestion. The platform should natively ingest AWS VPC Flow Logs, Azure virtual network flow logs, and Google Cloud VPC Flow Logs — not just accept them as a generic syslog input. Native integration means the platform understands provider-specific fields, sampling behavior, and metadata.

- Multi-cloud and hybrid support. You need a single analytics layer that normalizes telemetry from on-prem routers (NetFlow, IPFIX, sFlow) and multiple cloud providers simultaneously, so attacks that span environments are detected as a single event, not separate incidents in separate tools.

- Cloud metadata enrichment. Raw flow data becomes far more useful when enriched with cloud context: account/project, VPC/VNet, region/availability zone, subnet, security group, instance ID, and tags. This enrichment is what lets you answer “which workload is being targeted?” rather than just “which IP is being hit?”

- Dynamic baselining for elastic environments. Cloud traffic patterns shift as workloads scale up, scale down, or redeploy. Static thresholds generate false positives in dynamic environments. Look for platforms that adapt baselines automatically as infrastructure changes.

- Automated mitigation orchestration. The platform should trigger mitigation across both on-prem controls (RTBH, FlowSpec) and cloud-native or third-party protections (scrubbing providers, cloud DDoS services) based on configurable policies — ideally from a single workflow rather than requiring separate tools for each environment.

- AI-assisted investigation. During a cross-environment attack, speed and clarity matter. Platforms that use AI to correlate signals across cloud and on-prem telemetry, classify attack types, and surface recommended mitigation actions help teams respond faster, especially when the attack spans unfamiliar cloud territory.
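Attack classification does not have to be opaque. A simple, rule-based stand-in (not an AI model) shows the kind of signal involved. Reflected traffic arrives from the abused service's well-known UDP source port, so the byte share per reflector port toward a single target indicates which vectors are in play, including several at once. The port list covers commonly abused services. The function name `reflection_vectors` and the 20% `min_share` cutoff are hypothetical.

```python
from collections import Counter

UDP = 17
# Well-known UDP services commonly abused for reflection/amplification.
REFLECTION_PORTS = {19: "CHARGEN", 53: "DNS", 123: "NTP", 161: "SNMP",
                    389: "CLDAP", 1900: "SSDP", 11211: "memcached"}


def reflection_vectors(flows, min_share: float = 0.2) -> list:
    """Return reflection vectors that each carry at least min_share of bytes to one target.

    flows: iterable of (protocol, src_port, bytes) tuples toward a single destination.
    """
    per_port = Counter()
    total = 0
    for protocol, src_port, nbytes in flows:
        total += nbytes
        if protocol == UDP and src_port in REFLECTION_PORTS:
            per_port[src_port] += nbytes
    if total == 0:
        return []
    return sorted(f"{REFLECTION_PORTS[port]} reflection"
                  for port, nbytes in per_port.items() if nbytes / total >= min_share)


# A multi-vector example: NTP and DNS reflection plus some normal HTTPS.
print(reflection_vectors([(17, 123, 600), (17, 53, 300), (6, 443, 100)]))
```

Here NTP carries 60% and DNS 30% of the bytes, so both are reported. A small amount of DNS traffic mixed into normal web traffic stays below the cutoff and is not reported.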

Related Articles

- How to Detect DDoS Attacks Using Flow Analytics — Practical guide to flow-based DDoS detection methodology, workflows, and automated response.

- DDoS Detection — Conceptual overview of in-line vs. out-of-band detection and the role of big-data architecture.

- DDoS Protection and Mitigation: A 2026 Guide — Comprehensive guide to DDoS protection strategies, tools, and best practices.

- What Are VPC Flow Logs? — Deep dive into cloud-native flow log sources across AWS, Azure, and GCP.

- NETSCOUT Arbor Alternatives — How modern platforms compare to legacy appliance-based DDoS defense.

- Best Network Monitoring Tools for 2026 — Roundup of leading network monitoring and observability platforms.

FAQs about Cloud DDoS Detection and Protection

What is cloud DDoS detection?

Cloud DDoS detection is the practice of identifying distributed denial-of-service attacks using cloud-delivered analytics platforms and cloud-native telemetry sources. It encompasses both detecting attacks that target cloud workloads (using VPC flow logs and cloud metadata) and using SaaS-delivered platforms to detect DDoS across any environment — on-prem, cloud, or hybrid.

How do I detect DDoS attacks in AWS, Azure, and GCP?

Each major cloud provider offers flow logs (AWS VPC Flow Logs, Azure virtual network flow logs, Google Cloud VPC Flow Logs) that capture traffic metadata at the virtual network level. By ingesting these logs into a flow analytics platform, you can baseline normal traffic patterns, detect volumetric and protocol-level anomalies, and trigger automated mitigation. Kentik supports native integration with all three providers as part of a unified detection workflow.

What are VPC Flow Logs and how are they used for DDoS detection?

VPC Flow Logs are records of traffic metadata — source/destination IPs, ports, protocols, packet and byte counts — captured at the virtual network level in cloud environments. For DDoS detection, they serve the same role that NetFlow and IPFIX serve in on-prem networks: they provide the raw telemetry that analytics platforms use to baseline traffic, detect anomalies, and classify attacks. For a comprehensive overview, see What Are VPC Flow Logs?.

What’s the difference between cloud-delivered and appliance-based DDoS detection?

Appliance-based detection runs on dedicated hardware at each network boundary, processing flow data locally with fixed compute and storage capacity. Cloud-delivered detection ingests flow telemetry from across the entire network into a centralized SaaS platform with elastic scale. The cloud approach offers broader visibility, faster deployment, no hardware maintenance, and the ability to correlate on-prem and cloud telemetry in one view. Most modern architectures favor cloud-delivered detection with targeted appliance or cloud-native controls for mitigation.

Can I detect DDoS across hybrid and multi-cloud environments from one platform?

Yes. Modern cloud DDoS detection platforms ingest on-prem flow data (NetFlow, IPFIX, sFlow) alongside cloud-native flow logs from AWS, Azure, and GCP, normalizing them into a single unified view. This makes it possible to detect attacks that span environments — for example, a volumetric flood entering through a transit peer and targeting a cloud-hosted application — as a single correlated event rather than separate alerts in separate tools.

How does cloud DDoS detection trigger mitigation?

When a cloud DDoS detection platform identifies an attack, it can coordinate mitigation across multiple domains: RTBH or BGP FlowSpec on border routers for on-prem traffic, already-enabled cloud-native protections such as AWS Shield Advanced, Azure DDoS Protection, or Google Cloud Armor for cloud-exposed workloads, and traffic diversion to third-party scrubbing providers such as Cloudflare Magic Transit, Akamai Prolexic, or Radware. Kentik supports these mitigation paths and can automate the response based on attack characteristics and configurable policies.

Do I still need cloud-native DDoS protections if I have a detection platform?

Yes. Cloud-native protections like AWS Shield, Azure DDoS Protection, and Google Cloud Armor can provide always-on mitigation and, in some cases, provider-specific metrics, alerts, and attack telemetry. A dedicated detection platform adds something different: cross-environment visibility, unified baselining across on-prem and multi-cloud telemetry, deeper correlation with routing and cloud metadata, and orchestration across multiple mitigation domains. The strongest architectures layer both, with provider-native controls as the first line of defense and a SaaS detection platform providing the broader intelligence and coordination layer.

How does cloud DDoS detection handle the shared-responsibility model?

In cloud security’s shared-responsibility model, the provider protects the infrastructure layer while the customer is responsible for their workloads and data. Cloud DDoS detection fills the customer’s side of that responsibility. It provides visibility into traffic patterns within and across VPCs, detects application-layer and multi-vector attacks that cloud-native protections may not catch on their own, and orchestrates response across the customer’s full environment. Without a dedicated detection platform, the customer relies entirely on the cloud provider’s built-in protections, which may not be sufficient for sophisticated or targeted attacks.

What should service providers look for in a cloud DDoS detection platform?

Service providers need multi-tenant support (monitoring many customers from one platform), per-customer baselining and alerting, automated mitigation orchestration across RTBH/FlowSpec and third-party scrubbing, and the ability to ingest telemetry from both their own backbone and their customers’ cloud environments. Platforms that also support peering analytics, traffic engineering, and cost optimization — alongside DDoS detection — reduce the total number of tools required.

How does Kentik handle cloud DDoS detection?

Kentik ingests cloud-native flow logs from AWS, Azure, and GCP alongside on-prem NetFlow, IPFIX, and sFlow into a single big-data backend. It enriches all telemetry with cloud metadata (account, VPC, region, instance, tags) and BGP routing context, builds dynamic per-customer and per-prefix baselines, and detects anomalies across the unified data set. When an attack is identified, Kentik orchestrates mitigation via RTBH, Adaptive FlowSpec, or API-driven diversion to scrubbing partners — all from one platform.

Protect Cloud and Hybrid Networks from DDoS with Kentik

Kentik delivers cloud-scale DDoS detection and automated response across on-prem, cloud, and hybrid environments — so your team stops attacks faster without deploying appliances.

- Get a demo and see cloud DDoS detection in action

- Explore Kentik’s DDoS solutions page for product details and customer stories

- Browse our Network Security Learning Center for a comprehensive guide to modern DDoS defense

- Start a free trial to test Kentik with your own flow data