Latency vs Throughput vs Bandwidth: What They Mean and How to Monitor Them

As enterprise and internet service provider networks become more complex and users increasingly rely on seamless connectivity, understanding and optimizing the speed of data transfers within a network has become crucial. Latency, throughput, and bandwidth are key network performance metrics that provide insights into a network’s “speed.” Yet, these terms often lead to confusion as they are intricately related but fundamentally different. This article will shed light on these concepts and delve into their interconnected dynamics.

Latency vs throughput vs bandwidth at a glance

- Latency: The time it takes for a packet to travel from source to destination (measured in milliseconds)

- Throughput: The amount of data successfully delivered over time (measured in bits per second)

- Bandwidth: The maximum theoretical data transfer capacity of a link (measured in bits per second)

- Key relationship: High bandwidth enables high throughput, but high latency or packet loss can reduce actual throughput well below available bandwidth

- Why it matters for NetOps: Distinguishing between these three metrics is essential for diagnosing whether a performance problem requires more capacity, a better path, or a different fix entirely

Network Speed: Three Important Metrics

Network speed is often a blanket term for how fast data can travel through a network. However, it is not a singular, one-dimensional attribute but a combination of several factors. Three primary metrics defining network speed are latency, throughput, and bandwidth:

Latency

Latency refers to the time it takes for a packet of data to traverse from one point in the network to another. It is a measure of delay experienced by a packet, indicating the speed at which data travels across the network. Latency is often measured as round-trip time, which includes the time for a packet to travel from its source to its destination and for the source to receive a response. A network with low latency experiences less delay, thereby seeming faster to the end user.

Throughput

Throughput, on the other hand, is the amount of data that successfully travels through the network over a specified period. It reflects the data transfer rate the network actually achieves and is often seen as the actual speed of the network. Throughput depends on several factors, including the network’s physical infrastructure, the number of concurrent users, and the type of data being transferred.

Bandwidth

Bandwidth is the maximum data transfer capacity of a network. It defines the theoretical limit of data that can be transmitted over the network in a given time. Bandwidth is often compared to the width of a road, determining the maximum number of vehicles (or data packets in a network) that can travel simultaneously.

The Relationship Between Latency, Throughput, and Bandwidth

While latency, throughput, and bandwidth are distinct concepts, their interrelationships are pivotal in understanding network speed. They are often viewed together to assess a network’s performance.

Although maximum bandwidth indicates the highest possible data transfer capacity, it doesn’t necessarily translate to the speed at which data moves in the network. That’s where throughput comes in — it reflects the actual data transfer rate.

Latency is another crucial factor that impacts the speed at which data is delivered, regardless of throughput. High latency can slow down data delivery even on a high-throughput network because data packets take longer to reach their destination. Conversely, lower latency allows data to reach its destination more quickly, making the network feel faster to users even if the throughput is not exceptionally high.

In certain scenarios, latency and throughput are inversely related. For example, a TCP sender with a fixed window can deliver at most one window of data per round trip, so its throughput falls as round-trip time rises. However, it’s important to understand that this isn’t a strict relationship. There are instances where a network might have high throughput but also high latency, and vice versa.

This relationship can be complicated by various factors. For example, a network with high bandwidth can still experience low throughput if the latency is high due to network congestion, inefficient routing, or physical distance. Likewise, a network with high throughput might still deliver a poor user experience if the network suffers from high latency.

Understanding the distinctions and connections between latency, throughput, and bandwidth is essential in measuring, monitoring, and optimizing network speed. In the subsequent sections, we’ll delve deeper into these concepts and explore some of the ways network professionals can effectively manage them using Kentik’s network intelligence platform.

Kentik in brief: Kentik helps teams measure and optimize latency, throughput, and bandwidth by unifying interface and cloud metrics, flow analytics, and synthetic tests across on-prem, cloud, and internet paths. Kentik makes it easier to distinguish “we need more bandwidth” from “we need a better path” or “we have loss/jitter problems,” so fixes are evidence-based instead of guesswork.


What is Bandwidth?

Bandwidth is a fundamental element of network performance, representing a network’s maximum data transfer capacity. Imagine it as a highway: the wider it is, the more vehicles (or data packets) it can accommodate simultaneously. High bandwidth indicates a broader channel, allowing a greater volume of data to be transmitted at once, leading to potentially faster network speeds.

Bandwidth is typically measured in bits per second (bps), with modern networks often operating at gigabits per second (Gbps) or even terabits per second (Tbps) scales. This measurement describes the volume of data that can theoretically pass through the network in a given time frame.

However, merely having high bandwidth doesn’t ensure optimal network performance — it’s the potential capacity, not always the realized speed. Factors like network congestion, interference, or faulty equipment can reduce the effective bandwidth below the maximum. That’s where bandwidth monitoring becomes essential. NetOps professionals can preemptively address potential issues by tracking bandwidth usage, ensuring that actual data transfer rates don’t lag behind the available bandwidth.

What is Latency?

Latency is a critical aspect of network performance, representing the delay experienced by data packets as they travel from one point in a network to another. It is the time it takes for a message or packet of data to go from the source to the destination and, in some cases, back again (known as round-trip time). Latency significantly impacts the user’s perception of network speed; a network with lower latency often feels faster and provides a smoother, more responsive user experience. This is crucial for real-time applications like video conferencing, gaming, and live streaming.

What Causes Network Latency?

Several factors can influence latency, including:

- Distance: The physical distance between the source and destination significantly affects latency. Data packets travel through various mediums (e.g., cables), and the farther they have to go, the more time it takes.

- Network congestion: Congestion in a network can cause delays and increase latency, akin to how traffic congestion slows down vehicle movement.

- Hardware and infrastructure: The performance and efficiency of network hardware, such as routers and switches, as well as the quality of the network infrastructure, can impact latency.

- Transmission media: The medium of data transmission, whether it’s fiber optic cable, copper cable, or a wireless signal, also influences latency (e.g., a terrestrial fiber path typically has far lower latency than a geostationary satellite link).

- Number of hops: Each time a data packet passes from one network point to another, it’s considered a “hop.” More hops mean that data packets have more stops to make before reaching their destination, which can increase latency. The number of hops a packet makes can change based on the network’s configuration, topology, and path chosen for data transfer.
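
Distance sets a floor on latency that no amount of tuning removes. As a rough sketch, one-way propagation delay can be estimated from path length and signal speed. The 0.67 velocity factor for optical fiber is an approximate, commonly quoted figure, and the function name and example distance are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum
FIBER_VELOCITY_FACTOR = 0.67        # assumption: light in fiber travels at roughly 2/3 of c


def propagation_delay_ms(distance_km: float,
                         velocity_factor: float = FIBER_VELOCITY_FACTOR) -> float:
    """One-way propagation delay in milliseconds over the given path length."""
    if distance_km < 0 or not 0 < velocity_factor <= 1:
        raise ValueError("distance must be >= 0 and velocity factor in (0, 1]")
    return distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor) * 1000.0


# Hypothetical 5,600 km fiber path; real routes are longer than straight-line distance.
one_way = propagation_delay_ms(5_600)
print(f"one-way: {one_way:.1f} ms, round trip: {2 * one_way:.1f} ms")
```

Queuing, serialization, and per-hop processing delays are added on top of this floor, which is why measured latency is normally higher than the estimate.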

How is Latency Measured, Tested and Monitored?

Latency is typically measured in milliseconds (ms) and can be evaluated using methods like ping and traceroute tests. These tests send a message to a destination and measure the time it takes to receive a response.
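
ICMP ping usually needs raw-socket privileges, so a quick script often times a TCP handshake instead. The sketch below does that with Python’s standard library. It is a rough proxy for round-trip time, not a replacement for ping or traceroute, and the function name is hypothetical.

```python
import socket
import time


def tcp_connect_latency_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Time to complete a TCP handshake with host:port, in milliseconds.

    If host is a name rather than an IP address, DNS resolution time is
    included in the measurement.
    """
    start = time.perf_counter()
    # create_connection returns once the three-way handshake has completed.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0
```

Calling `tcp_connect_latency_ms("example.com", 443)` several times and looking at the spread, rather than a single value, gives a more useful picture, since any one sample can be skewed by transient queuing.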

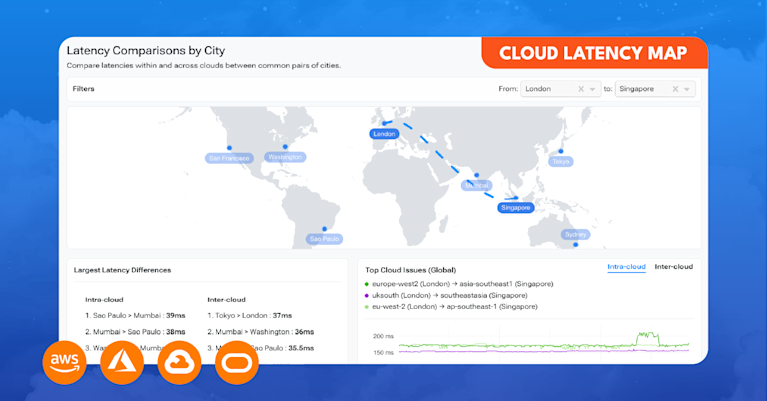

Advanced network performance platforms like Kentik Synthetics provide robust tools for continuous latency testing and monitoring. Through continuous ping and traceroute tests performed with software agents located globally, Kentik Synthetics measures essential metrics such as latency, jitter, and packet loss. These metrics are pivotal for evaluating network health and performance.
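
To illustrate how these three metrics can be derived from a series of probes, here is a minimal sketch. It assumes jitter is defined as the mean absolute difference between consecutive round-trip times, which is one common definition; how Kentik Synthetics computes jitter is not stated here, and the function name and sample values are hypothetical.

```python
from statistics import mean


def summarize_probes(rtts_ms):
    """Summarize probe results; each entry is an RTT in ms, or None if no reply arrived."""
    if not rtts_ms:
        raise ValueError("at least one probe result is required")
    replies = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(replies)) / len(rtts_ms)
    avg_ms = mean(replies) if replies else None
    # Differences are taken between consecutive replies, skipping lost probes.
    deltas = [abs(b - a) for a, b in zip(replies, replies[1:])]
    jitter_ms = mean(deltas) if deltas else 0.0
    return {"latency_ms": avg_ms, "jitter_ms": jitter_ms, "loss_pct": loss_pct}


# Hypothetical run of five probes, one of which was lost.
print(summarize_probes([20.0, 22.0, None, 21.0, 25.0]))
```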

Kentik Synthetics’ approach to latency evaluation involves comparing current latency values with a baseline value: the average of the prior 15 time slices (the length of each time slice depends on the selected time range). The system uses this information to assign latency a status level (Healthy, Warning, or Critical) based on how far it deviates from the baseline. This approach enables prompt identification and remediation of latency issues before they substantially impact network performance or user experience.
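
The baseline comparison can be sketched as follows. The 15-slice baseline comes from the description above, but the ratio thresholds for Warning and Critical are hypothetical placeholders, since the actual deviation thresholds are not given here.

```python
from statistics import mean

BASELINE_SLICES = 15   # baseline = average of the prior 15 time slices
WARNING_RATIO = 1.5    # hypothetical threshold, not a Kentik default
CRITICAL_RATIO = 2.0   # hypothetical threshold, not a Kentik default


def latency_status(history_ms, current_ms):
    """Classify current latency against the average of the most recent slices."""
    window = history_ms[-BASELINE_SLICES:]
    if not window:
        raise ValueError("history must contain at least one time slice")
    baseline = mean(window)
    if baseline <= 0:
        raise ValueError("baseline latency must be positive")
    ratio = current_ms / baseline
    if ratio >= CRITICAL_RATIO:
        return "Critical"
    if ratio >= WARNING_RATIO:
        return "Warning"
    return "Healthy"


# Hypothetical history: a steady 20 ms baseline, then a 32 ms reading.
print(latency_status([20.0] * 15, 32.0))
```

Comparing against a rolling baseline rather than a fixed number lets the same rule work for a 5 ms metro path and a 150 ms intercontinental one.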

Kentik Synthetics also offers insights such as “Latency Hop Count,” which analyzes changes in the hop count between the source agent and the test target and how those changes relate to latency. This type of analysis can help identify the key factors contributing to poor network performance. By visualizing the relationship between test latency and the number of hops, network professionals can rapidly identify and address issues that lead to increased latency.

What is Throughput?

Throughput measures how much data can be transferred from one location to another in a given amount of time. In the context of networks, it’s the rate at which data packets are successfully delivered over a network connection. It is often measured in bits per second (bps), kilobits per second (Kbps), megabits per second (Mbps), or gigabits per second (Gbps). High throughput means more data can be transferred in less time, contributing to better network performance and a smoother user experience.
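
As a small worked example, throughput is the number of bits delivered divided by the time taken. Transfer sizes are usually reported in bytes while rates are reported in bits, so the factor of 8 is easy to miss. The function names and figures below are illustrative.

```python
def throughput_bps(bytes_delivered: int, elapsed_seconds: float) -> float:
    """Average throughput in bits per second over the measurement interval."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return bytes_delivered * 8 / elapsed_seconds


def format_rate(bps: float) -> str:
    """Render a bit rate using the largest fitting decimal unit."""
    for unit, scale in (("Gbps", 1e9), ("Mbps", 1e6), ("Kbps", 1e3)):
        if bps >= scale:
            return f"{bps / scale:.2f} {unit}"
    return f"{bps:.0f} bps"


# Hypothetical transfer: 750 MB (decimal) delivered in 60 seconds.
print(format_rate(throughput_bps(750_000_000, 60)))  # 100.00 Mbps
```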

Several factors can influence throughput, including:

- Bandwidth: Bandwidth is the maximum capacity of a network connection to transfer data. Higher bandwidth typically enables higher throughput. However, bandwidth is only a potential rate, and the actual throughput could be lower due to other factors.

- Latency: Higher network latency can reduce throughput because acknowledgment-based protocols such as TCP allow only a limited amount of unacknowledged data in flight, so each round trip delivers a bounded amount of data.

- Packet loss: When data packets fail to reach their destination, the sending device often needs to resend them, which can lower the overall throughput.

- Network congestion: When many data packets are trying to travel over the network simultaneously, it can lead to congestion and a decrease in throughput.

- Hardware and infrastructure: The performance and efficiency of network hardware, such as routers and switches, can impact throughput.
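
The combined effect of latency and packet loss on a single TCP flow can be approximated with the well-known Mathis et al. formula, throughput ≈ (MSS / RTT) × (C / √p). This is a sketch under stated assumptions: it models classic loss-based congestion control, newer algorithms behave differently, and the example values are hypothetical.

```python
import math


def mathis_throughput_bps(mss_bytes: int, rtt_ms: float, loss_rate: float,
                          c: float = math.sqrt(3 / 2)) -> float:
    """Approximate upper bound on one TCP flow's throughput under random loss.

    loss_rate is a probability in (0, 1]; c is the model constant (about 1.22).
    """
    if rtt_ms <= 0 or not 0 < loss_rate <= 1:
        raise ValueError("rtt_ms must be positive and loss_rate in (0, 1]")
    rtt_s = rtt_ms / 1000.0
    return (mss_bytes * 8 / rtt_s) * (c / math.sqrt(loss_rate))


# Hypothetical flow: 1460-byte MSS, 0.01% loss, at two different round-trip times.
for rtt in (50, 100):
    print(f"RTT {rtt} ms: {mathis_throughput_bps(1460, rtt, 1e-4) / 1e6:.1f} Mbps")
```

In this model, doubling the round-trip time halves the bound, and so does quadrupling the loss rate, regardless of how much bandwidth the link offers.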

Why is Throughput Important?

Understanding throughput is crucial for managing network performance and capacity planning. If the throughput of a network connection frequently reaches its maximum capacity, it may cause network congestion and reduce the quality of the user experience. Monitoring throughput over time helps network professionals identify when it’s time to upgrade their network infrastructure.

In the realm of network capacity planning, throughput is closely related to the metric of utilization. Utilization represents the ratio of the current network traffic volume to the total available bandwidth on a network interface, typically expressed as a percentage. It’s an essential indicator of how much of the available capacity is being used. High utilization may lead to network bottlenecks and poor performance, highlighting the need for capacity upgrades or better traffic management.
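
Utilization is typically derived from two readings of an interface octet counter (for example, the SNMP `ifHCInOctets` counter) taken a known interval apart. The sketch below shows the arithmetic; the function name and sample values are hypothetical.

```python
def utilization_percent(octets_start: int, octets_end: int, interval_s: float,
                        link_bps: float, counter_bits: int = 64) -> float:
    """Percent of link capacity used between two octet-counter samples."""
    if interval_s <= 0 or link_bps <= 0:
        raise ValueError("interval_s and link_bps must be positive")
    # The modulo handles a single counter wrap between the two samples.
    delta_octets = (octets_end - octets_start) % (2 ** counter_bits)
    return delta_octets * 8 / (interval_s * link_bps) * 100.0


# Hypothetical samples: 4.5 GB transferred in 60 seconds on a 1 Gbps interface.
print(f"{utilization_percent(1_000, 4_500_001_000, 60, 1e9):.1f}%")
```

On fast links, 32-bit counters can wrap more than once between polls, which this arithmetic cannot detect, so 64-bit counters or shorter polling intervals are the safer choice.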

Tools like Kentik’s network capacity planning tool offer a comprehensive overview of key metrics such as utilization and runout (an estimate of when the traffic volume on an interface will exceed its available bandwidth). These insights can help organizations to foresee future capacity needs, mitigate congestion, and maintain high-performance networks.
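
One simple way to estimate runout is to fit a straight line to recent daily peak traffic and project when it crosses the interface’s capacity. This is a minimal sketch of that idea only; the linear model, function name, and sample data are assumptions, and Kentik’s actual runout calculation is not described here.

```python
def estimate_runout_days(daily_peak_bps, capacity_bps):
    """Days from the last sample until a least-squares trend line reaches capacity.

    Returns None when traffic is flat or declining, 0.0 if already at capacity.
    """
    n = len(daily_peak_bps)
    if n < 2:
        raise ValueError("need at least two daily samples")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(daily_peak_bps) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, daily_peak_bps))
    slope = sxy / sxx                      # growth in bps per day
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None
    day_at_capacity = (capacity_bps - intercept) / slope
    return max(0.0, day_at_capacity - (n - 1))


# Hypothetical 30 days of peaks growing 10 Mbps per day from 500 Mbps on a 1 Gbps link.
peaks = [500e6 + 10e6 * day for day in range(30)]
print(f"{estimate_runout_days(peaks, 1e9):.0f} days until runout")
```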

Latency vs Throughput: What’s the Difference?

While both latency and throughput are key metrics for measuring network performance, they represent different aspects of how data moves through a network.

Latency is about time: it measures the delay experienced by a data packet as it travels from one point in a network to another. Lower latency means data packets reach their destination more quickly, which is crucial for real-time applications like video calls and online gaming. In these contexts, even a slight increase in latency can lead to a noticeable decrease in performance and user experience.

On the other hand, throughput is about volume: it measures the amount of data that can be transferred from one location to another in a given amount of time. Higher throughput means more data can be transferred in less time, contributing to faster file downloads, smoother video streaming, and better overall network performance.

While these two concepts are related, they don’t always correlate perfectly. High latency can reduce throughput by limiting how quickly a sender can push new data, and driving throughput close to a link’s capacity can raise latency as packets wait in queues. A network can have low latency but also low throughput if its infrastructure cannot handle a large volume of data, just as it can have high throughput but also high latency if data packets take a long time to reach their destination due to factors such as network congestion, number of hops, or physical distance.

Understanding the difference between latency and throughput — and how they can impact each other — is crucial for network performance optimization. NetOps professionals must monitor both metrics to ensure they deliver the best possible service. For example, a network that primarily serves real-time applications may prioritize minimizing latency, while a network that handles large file transfers may prioritize maximizing throughput.

Throughput vs Bandwidth: Actual Packet Delivery vs Theoretical Packet Delivery

In the context of network performance, throughput and bandwidth are terms that are often misunderstood and used interchangeably. However, they represent different aspects of a network’s data handling capacity.

As mentioned earlier, bandwidth is the maximum theoretical data transfer capacity of a network. It’s like the width of a highway; a wider highway can potentially accommodate more vehicles at the same time. However, just because a highway has six lanes doesn’t mean it’s always filled to capacity or that traffic is flowing smoothly.

Throughput, on the other hand, is the actual rate at which data is successfully transmitted over the network, reflecting the volume of data that reaches its intended destination within a given timeframe. Returning to our highway analogy, throughput would be akin to the number of vehicles that reach their destination per hour. Various factors, like road conditions, traffic accidents, or construction work (analogous to network conditions, packet loss, or latency), can impact this number.

Throughput vs Bandwidth: Optimal Network Performance

In an ideal scenario, throughput would be equal to bandwidth, but real-world conditions often prevent this from being the case. Maximizing throughput up to the limit of available bandwidth while minimizing errors and latency is a primary goal in network optimization.

A network with ample bandwidth can still have low throughput if it suffers from high latency or packet loss. Therefore, for optimal network performance, both bandwidth (maximum transfer capacity) and throughput (actual network traffic volume) must be effectively managed and monitored.

Understanding these relationships is the foundation of effective network performance management. But knowing the definitions isn’t enough — teams need tools that measure all three metrics continuously and correlate them with traffic context, routing changes, and user experience. That’s where network intelligence comes in.

Related Kentipedia Articles

- Understanding Latency, Packet Loss, and Jitter in Network Performance

- Network Performance Monitoring (NPM)

- Network Performance Monitoring Metrics

- Bandwidth Utilization Monitoring: Best Practices for NetOps Professionals

- Network Capacity Planning

FAQs about Latency, Throughput, and Bandwidth

What is the difference between latency, throughput, and bandwidth?

Latency is the time it takes for a packet to travel from source to destination. Throughput is the amount of data successfully delivered over time. Bandwidth is the maximum theoretical capacity of a link. Together, these three metrics describe different dimensions of network speed.

Can a network have high bandwidth but low throughput?

Yes. A link may have plenty of available capacity but still deliver low throughput if latency is high, packet loss is occurring, or congestion is causing retransmissions. Bandwidth is the ceiling; throughput is what you actually get.

Does increasing bandwidth always fix slow network performance?

Not always. If the root cause is high latency (due to distance, routing inefficiency, or congestion) or packet loss, adding bandwidth won’t help. Teams need to identify whether the problem is capacity, path, or quality before choosing a fix.

How do latency and throughput affect each other?

High latency can reduce throughput because protocols like TCP limit how much unacknowledged data a sender may have in flight. On high-latency links, the send window fills up and transmission stalls until acknowledgments return, even when bandwidth is available. Techniques like TCP window scaling and selective acknowledgments (SACK) help mitigate this.
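
The window effect can be put into numbers: a single TCP flow can deliver at most one window of data per round trip, so its ceiling is the window size divided by the round-trip time. The sketch below compares the largest window possible without window scaling (65,535 bytes) against a larger scaled window. The link speed, round-trip time, and 16 MiB window are hypothetical values.

```python
MAX_UNSCALED_WINDOW = 65_535  # largest window a 16-bit TCP window field can advertise


def window_limited_bps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on one TCP flow's throughput: one window per round trip."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return window_bytes * 8 / (rtt_ms / 1000.0)


def achievable_bps(link_bps: float, window_bytes: int, rtt_ms: float) -> float:
    """The flow is capped by whichever is lower: the link or the window limit."""
    return min(link_bps, window_limited_bps(window_bytes, rtt_ms))


# Hypothetical 1 Gbps path with a 100 ms round-trip time.
for label, window in (("65,535-byte window", MAX_UNSCALED_WINDOW),
                      ("16 MiB window", 16 * 2**20)):
    print(f"{label}: {achievable_bps(1e9, window, 100) / 1e6:.1f} Mbps")
```

With the unscaled window the flow tops out at a few megabits per second on this path, which is why adding bandwidth alone does not help a window-limited transfer.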

What is a good latency for network performance?

It depends on the application. Real-time services like video conferencing and gaming are sensitive to latency above 50–100 ms. Bulk data transfers are less latency-sensitive but still affected because high latency reduces TCP throughput. The important thing is to measure latency continuously and compare it against baseline values for your specific services.

How do you measure latency, throughput, and bandwidth?

Latency is typically measured using ping (ICMP) and traceroute tests, reported in milliseconds. Throughput is measured by tracking actual data transfer rates over time using flow analytics or speed tests. Bandwidth is determined by the physical or provisioned capacity of a link. Tools like Kentik Synthetics continuously measure latency, jitter, and packet loss, while Kentik NMS tracks interface utilization and throughput.

What causes high latency in a network?

Common causes include physical distance between endpoints, network congestion, inefficient routing (too many hops or suboptimal paths), overloaded network devices, and the characteristics of the transmission medium. Identifying the specific cause requires correlating latency measurements with path data and traffic patterns.

What is the relationship between utilization and throughput?

Utilization is the percentage of a link’s available bandwidth currently in use — essentially throughput expressed as a fraction of capacity. High utilization means throughput is approaching the link’s bandwidth limit, which can indicate congestion risk. Monitoring utilization helps teams plan capacity upgrades before links saturate.

How does network intelligence help optimize latency, throughput, and bandwidth?

Network intelligence platforms like Kentik correlate interface metrics, flow analytics, synthetic tests, and routing data to distinguish between different causes of poor performance. Instead of guessing whether you need more bandwidth or a better path, you can see the evidence — which traffic is driving utilization, where latency is introduced along a path, and whether routing changes would improve throughput more effectively than capacity upgrades.

What tools monitor latency, throughput, and bandwidth across hybrid and multi-cloud networks?

Modern network intelligence platforms combine synthetic testing (for latency, jitter, and loss), device metrics via SNMP and streaming telemetry (for utilization and throughput), and flow analytics (for traffic context). Kentik unifies all three across on-prem, cloud, and internet paths in a single platform, with AI-assisted investigation to accelerate troubleshooting.

Measure and Optimize Latency, Throughput, and Bandwidth with Kentik

Kentik is the network intelligence platform that helps teams move from “the network is slow” to evidence-based answers about what’s actually happening — and what to do about it.

- Get a demo — See how Kentik measures latency, throughput, and utilization across hybrid networks

- Kentik Synthetics — Continuous latency, jitter, and packet loss testing from global and private agents

- Network Performance Monitoring — End-to-end visibility across data center, cloud, and internet paths

- Capacity Planning — Forecast bandwidth demand and plan upgrades before links saturate

- Kentik NMS — Modern device and interface monitoring with SNMP and streaming telemetry